H.A.I.R - AI in HR


Some excellent events coming up

Wherever you are in the world, there are some great opportunities to learn more about AI Governance right now.

Hello H.A.I.R. Community,

As most of you will know, I recently took up a new role at Warden AI.

Although I’ve not been able to focus on H.A.I.R. as much as I would like, my new role gives me further opportunities to help educate the staffing, recruitment and HR world on AI governance.

To this end, I want to share three things with you: two webinars and an online resource.

California: Operationalizing the CCPA Automated Decisionmaking Technology (ADMT) rules

While the industry is distracted by generative AI hype, a massive regulatory shift is quietly happening. On Thursday, March 5th, I'm hosting an exclusive webinar to unpack California's new CCPA ADMT rules (effective January 2026). I'll be joined by Liisa Thomas (Lead Privacy Partner, Sheppard Mullin) and Ram Gudavalli (CTO, Sense) for a frank "Law vs. Code" debate.

We are skipping the dry legal checklists and diving straight into the operational reality for recruiters and staffing firms: how to audit your "black box" vendors, how to handle candidate opt-outs without creating a black hole, and where exactly the line falls between automated "logistics" and regulated "decisions." If your firm processes high volumes of candidates using AI, VMS or matching algorithms, this is the briefing you need.

Talent Acquisition in an Agentic Age

For years, AI in talent acquisition has been framed around speed and efficiency. But the real differentiator going forward will be responsibility.

As regulatory pressure increases and scrutiny around bias grows, TA leaders are asking tougher questions about transparency, explainability, and compliance.

I’ll be joining Madeline Laurano (Aptitude Research) and Ritu Mohanka (VONQ) to explore how agentic AI can support structured decision-making while keeping recruiters in control. We will discuss how scoring models can be made visible, auditable and aligned with fairness principles, and, crucially, what responsible AI in hiring really looks like.

📆: 10 March 2026

🕜: 3 - 4pm CET | 2 - 3pm GMT | 10 - 11am ET

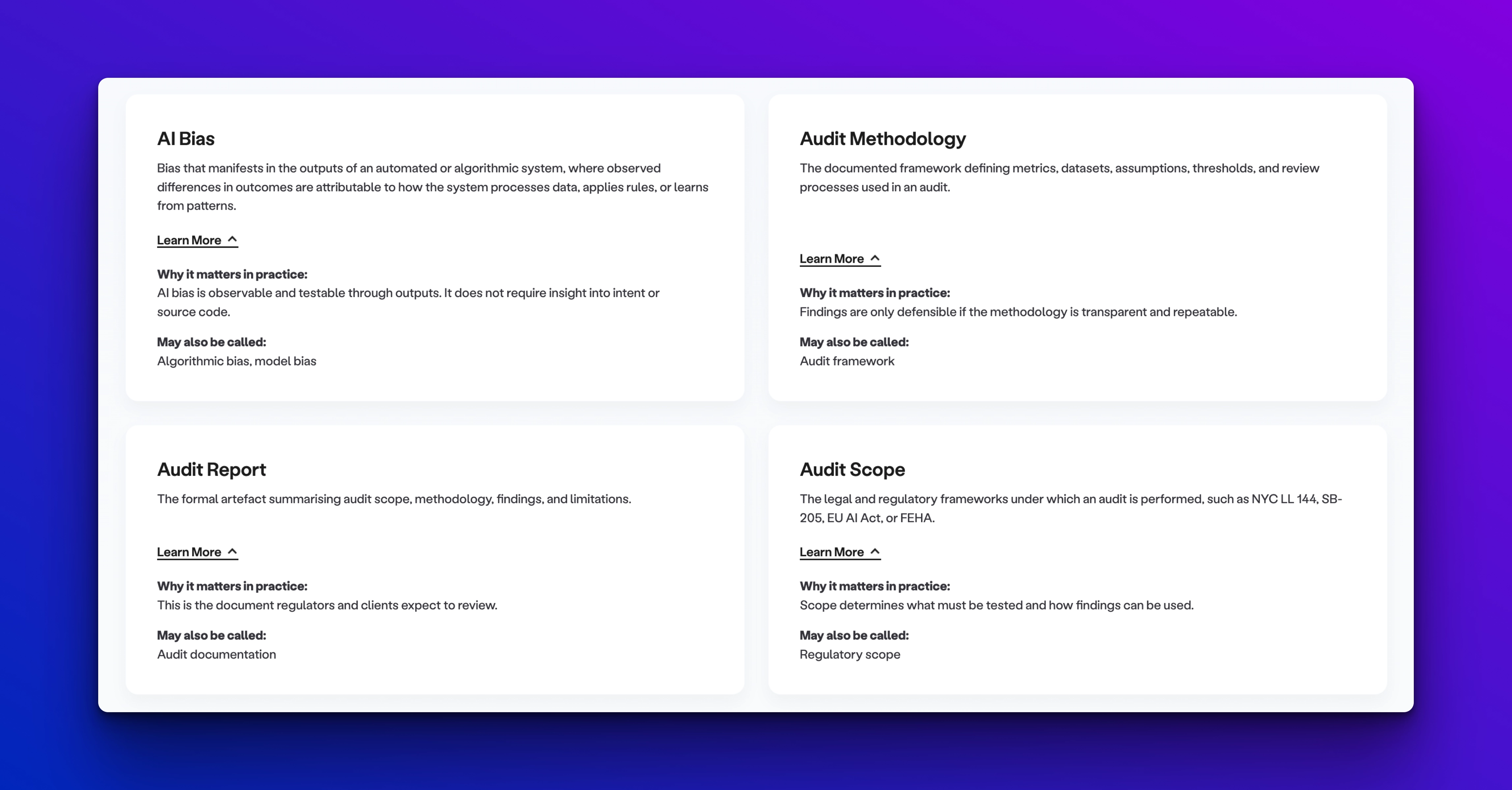

The Warden Lexicon of AI Bias Auditing

Bias auditing is becoming a baseline expectation in HR tech procurement, regulatory compliance, and enterprise AI risk management. But the conversations around it still lack a shared vocabulary.

Terms like automated decisionmaking technology, disparate impact, proxy bias, pre-deployment testing, post-deployment monitoring, and third-party audit appear constantly in sales decks, policies, audit reports, and regulatory guidance, often meaning slightly different things depending on who is using them.

That ambiguity creates risk, and it makes meaningful oversight harder than it needs to be.

When terminology is used loosely, it becomes genuinely difficult to understand what was actually tested, what audit findings mean in practice, or whether a given system even falls under regulatory scrutiny.

So we built something to help the industry: the Lexicon of AI Bias Auditing, a plain-English reference guide to the core terminology used in fairness and risk evaluation for AI hiring systems. The goal is to make meaningful oversight easier by giving practitioners, procurement teams and regulators a common starting point.

Thank you for being part of H.A.I.R. I can’t wait to show you its next iteration.

Until next time,

Head of Responsible AI & Industry Engagement

Warden AI
